The executive team and investors of Uber do not understand meal delivery, or logistics in general.

Because they don’t understand the market they operate in, they believe everything the AI says and does.

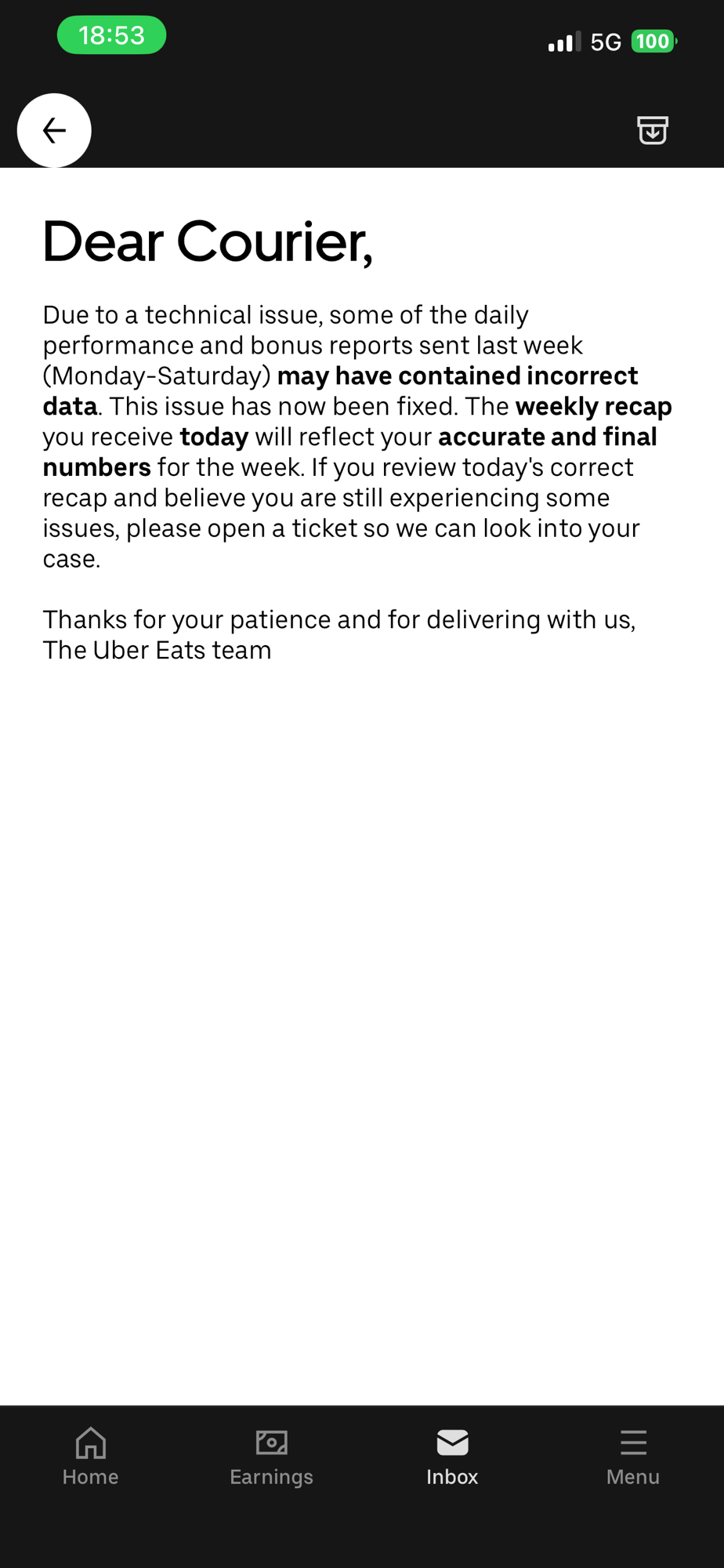

Without transparent statistics and without worker feedback, there is no way anyone can ever tell these people what is going wrong.

And even then, the AI is super smart, so it can easily convince Uber's AI-obsessed leadership of whatever it likes.

So Uber is now stuck in a loop of fools.

Uber has never operated in good conscience in any of its markets, and with AI that has become more obvious than ever.